CWCloud MCP server and AI agent

You might ask why we're still discussing MCP (Model Context Protocol) in 2026, when many people keep saying it's no longer useful and that AI agents can simply use a CLI instead of relying on an MCP server that someone has to maintain.

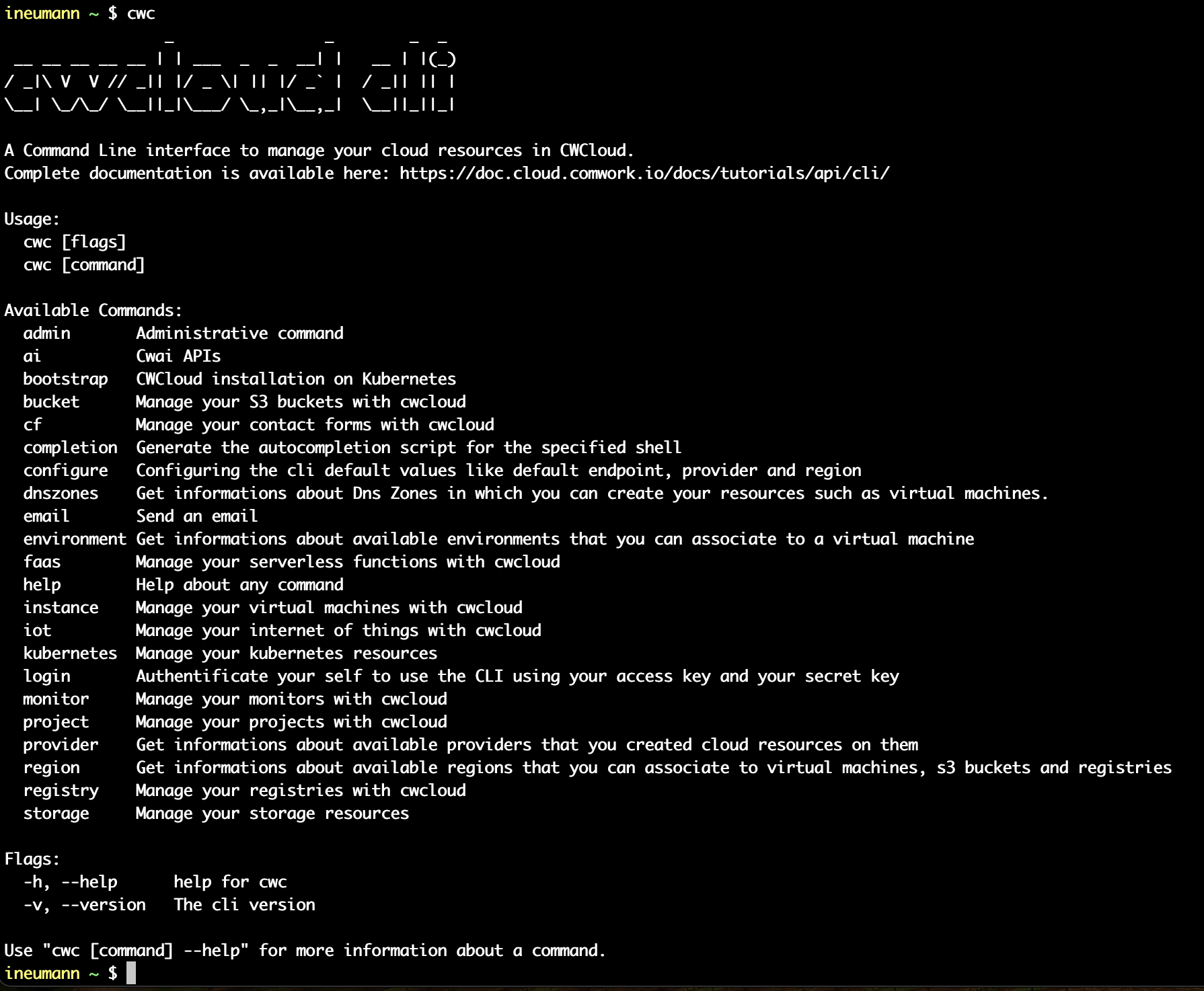

That's the main reason it took us so long to deliver an MCP server: we already maintain the cwc CLI and didn't want to duplicate the work by maintaining both a CLI and an MCP server.

Initially, we tried to package all CLI features in Go packages that could be included in the MCP server. However, we quickly realized it was too much work and would require maintaining both artifacts each time we added a new feature.

After a few attempts, we realized we could dynamically generate the MCP server and its tool definitions directly from the CLI, which is implemented in Go with Cobra. This way, all tool definitions are computed from the CLI documentation and the MCP server is generated on the fly, without maintaining two separate codebases.

Even better: developers can add subcommands and the MCP server will dynamically use them without any extra work.
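To make the idea more concrete, here is a minimal sketch of how tool definitions could be derived from a command tree. It is not the actual cwc implementation: the Command and Tool types are hypothetical stand-ins (not the real cobra.Command), the "app" command tree is made up, and the underscore naming scheme is an assumption.

```go
package main

import (
	"fmt"
	"strings"
)

// Command is a hypothetical stand-in for a Cobra command: a name, a short
// description, and optional subcommands. It is NOT the real cobra.Command.
type Command struct {
	Name  string
	Short string
	Subs  []*Command
}

// Tool is a hypothetical, simplified tool definition.
type Tool struct {
	Name        string
	Description string
}

// CollectTools walks the command tree depth-first and returns one tool per
// leaf command. The tool name joins the command path with underscores
// (an assumed naming scheme, chosen for illustration only).
func CollectTools(cmd *Command, path []string) []Tool {
	// Copy the path so sibling branches never share a backing array.
	current := append(append([]string{}, path...), cmd.Name)
	if len(cmd.Subs) == 0 {
		return []Tool{{Name: strings.Join(current, "_"), Description: cmd.Short}}
	}
	var tools []Tool
	for _, sub := range cmd.Subs {
		tools = append(tools, CollectTools(sub, current)...)
	}
	return tools
}

// exampleTree builds a small, made-up command tree for illustration.
func exampleTree() *Command {
	return &Command{Name: "app", Subs: []*Command{
		{Name: "project", Subs: []*Command{
			{Name: "ls", Short: "List projects"},
			{Name: "delete", Short: "Delete a project"},
		}},
		{Name: "monitor", Subs: []*Command{
			{Name: "ls", Short: "List monitors"},
		}},
	}}
}

func main() {
	for _, t := range CollectTools(exampleTree(), nil) {
		fmt.Printf("%s: %s\n", t.Name, t.Description)
	}
}
```

Because the tools are recomputed from the tree each time, adding a new leaf command to the tree is enough for it to show up as a tool, which is the property described above.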

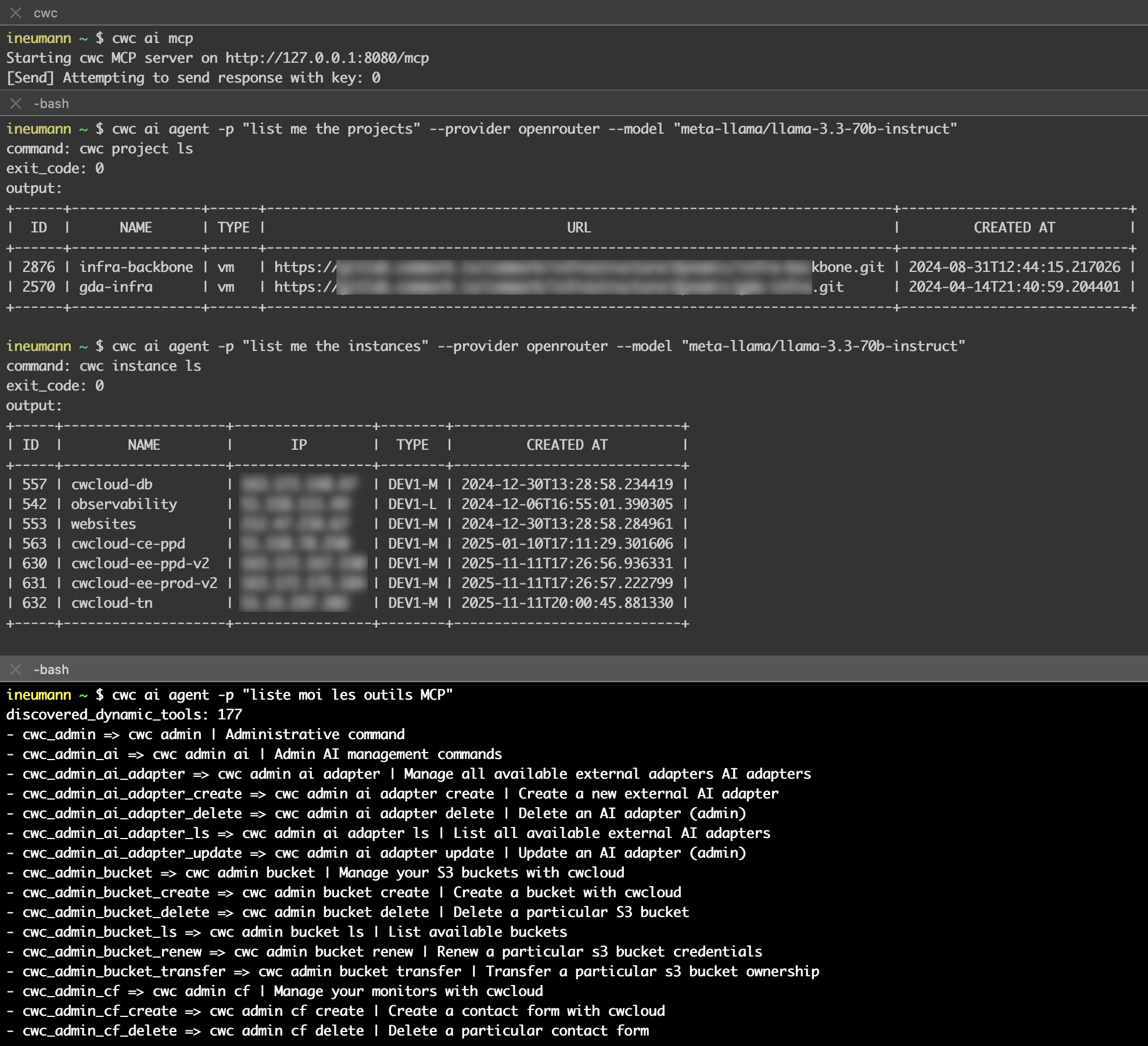

And that's it. Just run this subcommand to start the MCP server:

$ cwc ai mcp

Starting cwc MCP server on http://127.0.0.1:8080/mcp

Of course, you can change the port and listen address like this:

$ cwc ai mcp -p 8081 -l 0.0.0.0

Starting cwc MCP server on http://0.0.0.0:8081/mcp

The server also exposes a list_mcp_dynamic_tools tool that lists all the tools available on the server.
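For readers who want to see what calling such a tool looks like on the wire, here is a minimal sketch that builds the JSON-RPC 2.0 payload MCP uses for a tools/call request. We assume here that list_mcp_dynamic_tools takes no arguments; BuildToolCall and the struct names are hypothetical helpers, not part of cwc. The sketch only builds the request body: a real client would normally go through the MCP initialization handshake before sending it.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// toolCallParams holds the parameters of an MCP "tools/call" request.
type toolCallParams struct {
	Name      string         `json:"name"`
	Arguments map[string]any `json:"arguments"`
}

// rpcRequest models a JSON-RPC 2.0 request carrying a tool call.
type rpcRequest struct {
	JSONRPC string         `json:"jsonrpc"`
	ID      int            `json:"id"`
	Method  string         `json:"method"`
	Params  toolCallParams `json:"params"`
}

// BuildToolCall (hypothetical helper) serializes a "tools/call" request for
// the given tool name. A nil argument map is sent as an empty JSON object.
func BuildToolCall(id int, tool string, args map[string]any) ([]byte, error) {
	if args == nil {
		args = map[string]any{}
	}
	return json.Marshal(rpcRequest{
		JSONRPC: "2.0",
		ID:      id,
		Method:  "tools/call",
		Params:  toolCallParams{Name: tool, Arguments: args},
	})
}

func main() {
	// Assumption: the tool takes no arguments.
	body, err := BuildToolCall(1, "list_mcp_dynamic_tools", nil)
	if err != nil {
		panic(err)
	}
	fmt.Println(string(body))
}
```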

Now you might ask: "OK, fine, but why should I use the MCP server instead of directly using the CLI with an agent?" In our opinion, the two approaches are complementary and can work together. We believe providing MCP tools to your agents requires less effort than implementing a custom agent that calls the CLI and parses its output.

We also now provide a way to create agents that can call the MCP server, using the cwc ai agent command:

$ cwc ai agent -p "your prompt"

The command uses OpenAI's gpt-4o-mini by default but also supports other models from OpenAI, Anthropic, Google Gemini, DeepSeek, or OpenRouter (which gives access to open models such as Meta's Llama):

$ cwc ai agent -p "your prompt" --provider openrouter --model "meta-llama/llama-3.3-70b-instruct"

Here's a demo showing how to use the MCP server with an agent to list projects, instances, and the available MCP tools:

Notes:

- You can prompt in other languages like French, as shown in the demo.

- You must expose the MCP server in a separate terminal. This allows you to target a remote MCP server using the -s flag (we'll add authentication later):

$ cwc ai agent -p "list me the projects" -s "http://127.0.0.1:8081/mcp"

- Complete documentation is available here.

Don't forget that the CLI can also update or delete resources such as instances, monitors, and projects. So be careful with your prompts!

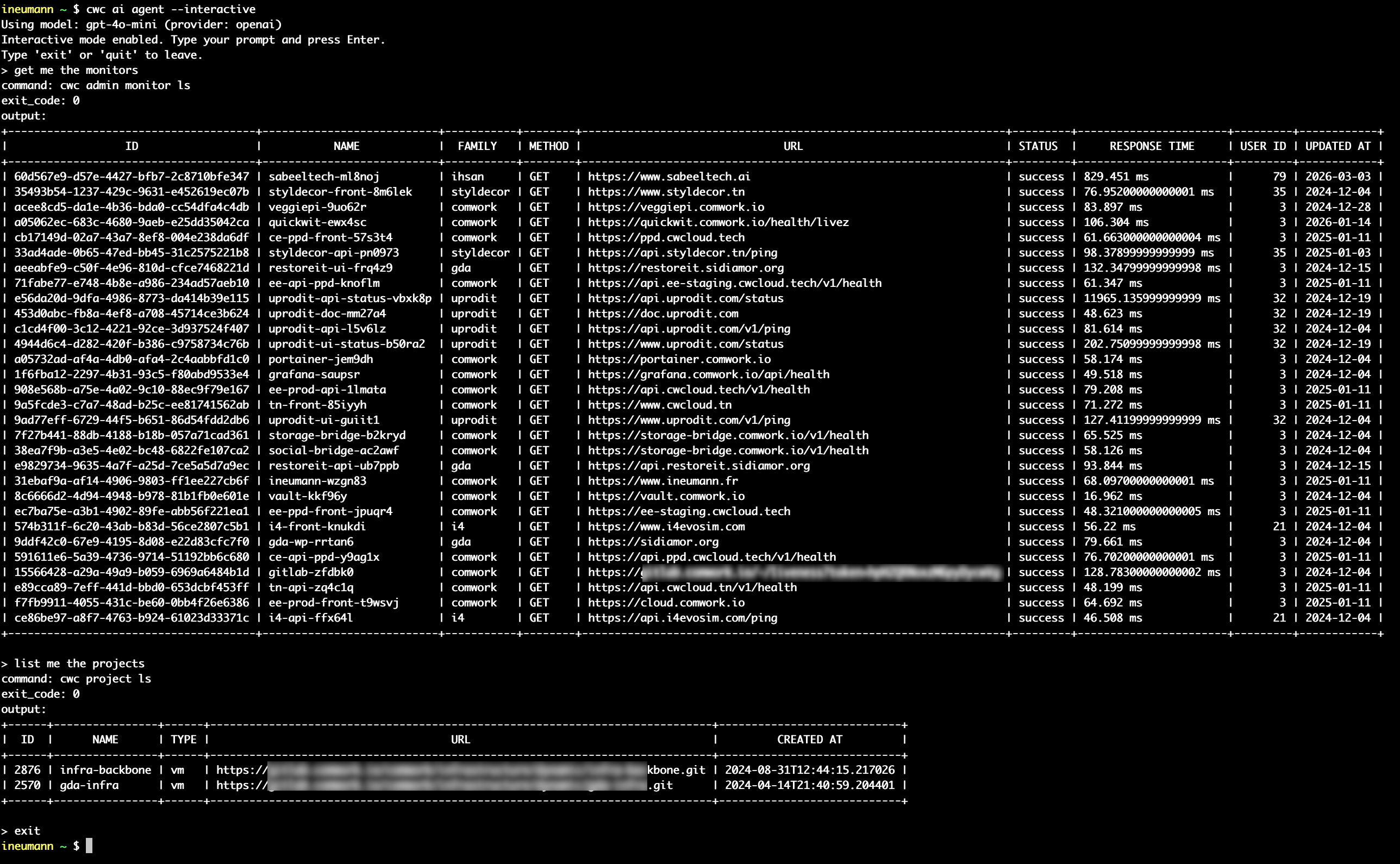

We also provide an interactive (REPL) mode for the agent that lets you run multiple prompts in the same session (with the -i or --interactive flag):

$ cwc ai agent -i

Using model: gpt-4o-mini (provider: openai)

Interactive mode enabled. Type your prompt and press Enter.

Type 'exit' or 'quit' to leave.

> get me the monitors

> list me all the available MCP tools

Demo:

We'll add many more features, so stay tuned!